Let me be direct about something. Most of what you read about AI in automated testing sounds like a vendor pitch. Autonomous agents. Self-healing scripts. Zero maintenance. The marketing is ahead of reality. But the reality is still impressive. After 26 years in enterprise QA consulting, I have seen enough technology cycles to know when something is genuinely shifting the work. AI in test automation is one of those shifts. Not in the way the hype suggests, but in ways that matter daily to QA engineers and SDETs building real test suites under real delivery pressure.

This post breaks down what is actually happening, what the research says, what the tools do, and how to think about applying AI in automated testing without losing your mind or your account budget.

What Is AI in Automated Testing?

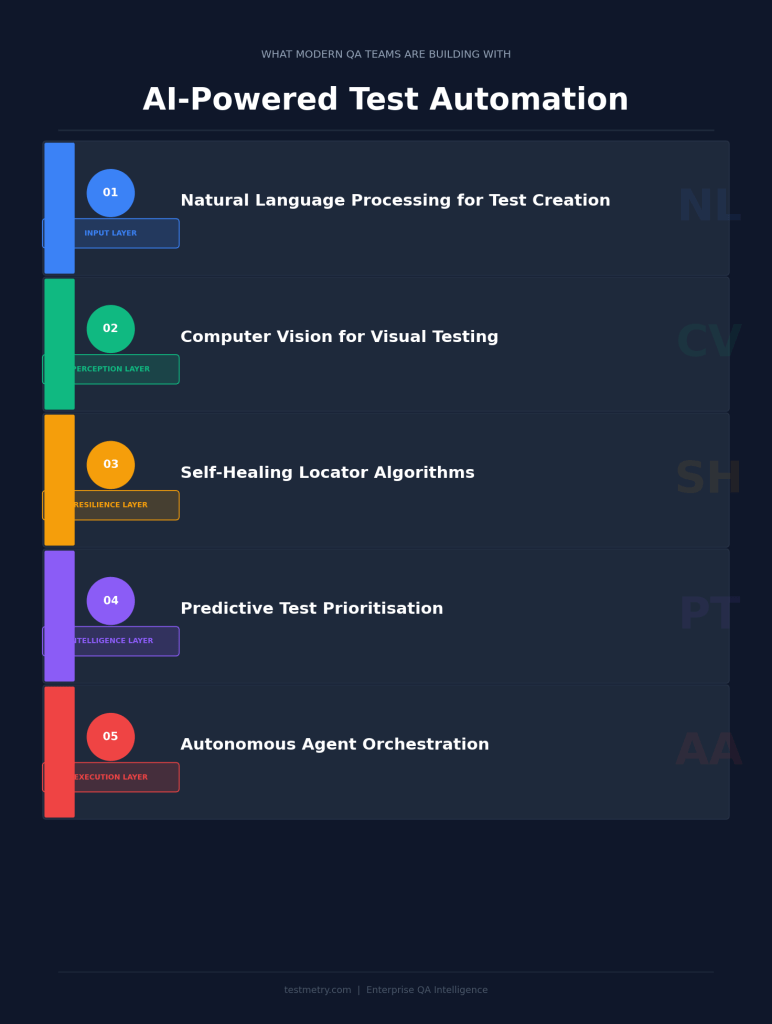

AI in automated testing refers to the use of machine learning, natural language processing, and intelligent algorithms to improve how software tests are created, maintained, executed, and analysed. It is not a single technology. It is a category of capabilities that different tools apply in different ways.

The distinction matters because teams often treat AI testing as a single thing. It is not. You need to be specific about which capability you are applying and what problem you are solving.

| AI Capability | What It Does | Real-World Value |
| --- | --- | --- |
| Test Generation | Creates test cases from user stories, requirements, or recorded sessions using NLP | Faster test authoring, better coverage of edge cases |
| Self-Healing Tests | Detects when UI elements change and updates locators automatically | Reduces maintenance burden on regression suites |
| Predictive Analytics | Analyses historical data to predict which areas are most likely to fail | Smarter test prioritisation, faster feedback loops |
| Visual AI Testing | Compares screenshots intelligently to detect visual regressions | Catches rendering bugs that DOM-based tests miss |
| Agentic AI Testing | Autonomous agents that plan, execute, and adapt tests based on goals | Emerging fourth wave, still maturing in 2026 |

What the Research Actually Says About AI in Testing

Numbers get thrown around in this space without much context. Let me give you the ones that actually matter, with the source behind them.

Rainforest QA surveyed more than 600 software developers across the US, Canada, UK, and Australia. Three-quarters of teams using traditional code-based automation frameworks had adopted AI testing tools. But the early data showed a complicated picture: teams were not yet saving time on maintenance. Fast forward to 2025 and they are.

The takeaway is not that AI testing failed. The teams that adopted early were still learning how to integrate it. The ones who stayed patient are now seeing their investment pay off in reduced test maintenance cycles and faster authoring.

Tricentis research showed that 80% of software teams planned to use AI in testing by the end of 2025. Gartner projects that by 2028, 33% of enterprise software applications will include agentic AI capabilities, up from less than 1% in 2024.

The Four Waves of AI in Test Automation

Figure: AI in Automated Testing Waves

This framing comes from conversations with practitioners who have watched this space develop. It is the most useful mental model I have found for understanding where different tools fit.

Wave 1: Record and Playback with ML

Tools that record user actions and use machine learning to make those recordings more resilient. Early Selenium alternatives. Most of these have been absorbed into larger platforms or replaced by more capable tools.

Wave 2: AI-Assisted Test Authoring

Tools that suggest test cases, generate boilerplate from natural language inputs, and assist with test data creation. GitHub Copilot for test code falls here. Katalon with AI suggestions. Useful but still heavily human-directed.

Wave 3: Autonomous Self-Healing Systems

Tools that actively maintain test suites, detect breakage before it blocks the pipeline, and adapt to UI changes without human intervention. Testim, Mabl, Applitools, and Functionize sit here. This is where most enterprise adoption is concentrated in 2026.

Wave 4: Agentic AI Testing

Goal-oriented autonomous agents that plan the test approach, execute across environments, evaluate results, and adapt strategy based on what they find. This wave is emerging. Perfecto’s agentic AI features (released mid-2025) and similar efforts from other vendors are early examples. The fourth wave requires a different approach to governance and human oversight.

How AI in Automated Testing Works: Core Mechanisms

Figure: AI in Automated Testing Working

Understanding the mechanisms helps you evaluate vendor claims with more precision. Most AI-based automated testing tools use some combination of the following approaches.

1. Natural Language Processing for Test Creation

NLP allows testers to describe test scenarios in plain English, which the tool then converts into executable test scripts. Functionize and BlinqIO use this approach. The quality of the output depends heavily on how precisely you describe the scenario and how well the tool handles your application context.

2. Computer Vision for Visual Testing

Tools like Applitools use proprietary visual AI algorithms to compare screenshots. Unlike pixel-by-pixel comparison, visual AI understands context. It knows that a slightly different font size is not the same class of problem as a missing navigation element. This reduces false positives significantly in regression visual testing.
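To see why exact pixel comparison generates so many false positives, here is a minimal sketch. It is not how Applitools or any commercial visual AI works; those algorithms are proprietary. It is a plain tolerance heuristic I am assuming for illustration, with screenshots modelled as small grayscale grids and hypothetical names and thresholds throughout.

```python
# Screenshots are modelled as grids of grayscale pixels (0-255).
# This is a simple tolerance heuristic, NOT a real visual AI algorithm.
Image = list[list[int]]


def pixel_equal(a: Image, b: Image) -> bool:
    """Strict pixel-by-pixel comparison: any difference counts as a change."""
    return a == b


def changed_fraction(a: Image, b: Image, pixel_tolerance: int = 10) -> float:
    """Fraction of pixels whose difference exceeds the per-pixel tolerance."""
    total = 0
    changed = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += 1
            if abs(pa - pb) > pixel_tolerance:
                changed += 1
    return changed / total


def is_regression(a: Image, b: Image, max_changed: float = 0.01) -> bool:
    """Flag a regression only when enough pixels changed meaningfully."""
    return changed_fraction(a, b) > max_changed


baseline = [[200] * 10 for _ in range(10)]
# Slight rendering drift: every pixel is a little different.
slight_drift = [[203] * 10 for _ in range(10)]
# A missing navigation bar: the top two rows are gone entirely.
missing_nav = [[0] * 10 for _ in range(2)] + [[200] * 10 for _ in range(8)]

print(pixel_equal(baseline, slight_drift))    # strict comparison reports a difference
print(is_regression(baseline, slight_drift))  # tolerant comparison does not
print(is_regression(baseline, missing_nav))   # a real layout change is still flagged
```

The strict check treats rendering drift and a missing navigation bar as the same kind of failure. Even this crude tolerance separates them, which is the direction visual AI pushes much further.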

3. Self-Healing Locator Algorithms

When a UI element changes, traditional automation breaks because the locator no longer points to the right thing. Self-healing algorithms maintain multiple locator strategies for each element, detect when the primary locator fails, and automatically try alternatives. Better implementations also log what changed, so engineers can review decisions.
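The fallback idea is easier to judge when you see it in code. This is a minimal sketch under stated assumptions: the page is a plain dictionary standing in for a real driver lookup, and `HealingLocator`, its fields, and the locator strings are hypothetical names, not any vendor's API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class HealingLocator:
    """An element reference with a primary locator and ordered fallbacks."""
    name: str
    strategies: list[str]  # primary first, then alternatives
    audit_log: list[dict] = field(default_factory=list)

    def find(self, page: dict[str, str]) -> Optional[str]:
        """Try each strategy in order and record when a fallback was needed."""
        for index, strategy in enumerate(self.strategies):
            element = page.get(strategy)  # stand-in for a real driver lookup
            if element is None:
                continue
            if index > 0:
                # A fallback matched: log it so an engineer can review the decision.
                self.audit_log.append({
                    "element": self.name,
                    "failed": self.strategies[:index],
                    "healed_with": strategy,
                })
            return element
        return None


# Hypothetical page state after a release removed the button's id attribute.
page_after_release = {
    "css=button.primary": "<button class='primary'>Submit</button>",
    "text=Submit": "<button class='primary'>Submit</button>",
}

submit = HealingLocator(
    "submit_button",
    ["id=submit", "css=button.primary", "text=Submit"],
)
element = submit.find(page_after_release)
print(element)
print(submit.audit_log)
```

The audit log is the part I would not skip. Without it, a healed test passes silently and nobody knows the locator moved.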

4. Predictive Test Prioritisation

ML models trained on historical test run data, code change patterns, and defect history can predict which tests are most likely to surface failures in a given build. This matters enormously for long test suites in CI/CD pipelines where running everything is not practical within a deployment window.
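A real tool would train a model on this data. As a rough sketch of the inputs and the output, here is a hand-weighted heuristic instead. The weights, the `HistoryRecord` structure, and the test and file names are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class HistoryRecord:
    """Historical data for one automated test (hypothetical structure)."""
    name: str
    runs: int
    failures: int
    covered_files: set[str]


def risk_score(record: HistoryRecord, changed_files: set[str]) -> float:
    """Combine historical failure rate with overlap against the current change."""
    failure_rate = record.failures / record.runs if record.runs else 0.0
    touches_change = 1.0 if record.covered_files & changed_files else 0.0
    # Illustrative weights, not tuned values. A trained model would replace this line.
    return 0.6 * touches_change + 0.4 * failure_rate


def prioritise(records: list[HistoryRecord], changed_files: set[str]) -> list[str]:
    """Return test names ordered from highest to lowest estimated risk."""
    ranked = sorted(records, key=lambda r: risk_score(r, changed_files), reverse=True)
    return [r.name for r in ranked]


history = [
    HistoryRecord("login_flow", runs=200, failures=4, covered_files={"auth.py"}),
    HistoryRecord("checkout_flow", runs=200, failures=30, covered_files={"cart.py", "payment.py"}),
    HistoryRecord("search_filters", runs=100, failures=40, covered_files={"search.py"}),
]

order = prioritise(history, changed_files={"payment.py"})
print(order)  # ['checkout_flow', 'search_filters', 'login_flow']
```

In a pipeline you would run the top of this list first and defer the rest to a nightly job, which is how a long suite fits inside a short deployment window.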

5. Autonomous Agent Orchestration

Agentic AI testing involves planning agents that decompose a high-level goal into specific test steps, execution agents that carry them out, and evaluation agents that assess the results and decide whether to continue, retry, or escalate. This multi-agent architecture is complex to govern but powerful for exploring unknown application territory.
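The plan, execute, evaluate loop can be sketched in a few lines. This is a toy under loud assumptions: the planner returns hard-coded steps, the executor is a stub that fails once, and `run_goal`, `Verdict`, and the step names are hypothetical. Real agents would call a model at each stage.

```python
from enum import Enum
from typing import Callable


class Verdict(Enum):
    CONTINUE = "continue"
    RETRY = "retry"
    ESCALATE = "escalate"


def plan(goal: str) -> list[str]:
    """Planning agent stand-in: decompose a goal into ordered steps."""
    return [f"{goal}: open page", f"{goal}: submit form", f"{goal}: verify result"]


def evaluate(passed: bool, attempts: int, max_attempts: int) -> Verdict:
    """Evaluation agent stand-in: decide what happens after one execution."""
    if passed:
        return Verdict.CONTINUE
    return Verdict.RETRY if attempts < max_attempts else Verdict.ESCALATE


def run_goal(goal: str, execute: Callable[[str], bool],
             max_attempts: int = 2) -> list[tuple[str, str]]:
    """Run every planned step and return the full decision log."""
    decisions: list[tuple[str, str]] = []
    for step in plan(goal):
        attempts = 0
        while True:
            attempts += 1
            verdict = evaluate(execute(step), attempts, max_attempts)
            decisions.append((step, verdict.value))
            if verdict is Verdict.CONTINUE:
                break
            if verdict is Verdict.ESCALATE:
                return decisions  # stop and hand over to a human
    return decisions


calls: dict[str, int] = {}


def flaky_executor(step: str) -> bool:
    """Execution agent stub: the submit step fails once, then succeeds."""
    calls[step] = calls.get(step, 0) + 1
    return not (step.endswith("submit form") and calls[step] == 1)


log = run_goal("checkout", flaky_executor)
for step, verdict in log:
    print(step, "->", verdict)
```

Notice that the function returns every decision, not just the final result. That decision log is what governance of an agent starts from.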

Top AI Testing Tools QA Teams Are Using in 2026

I am not going to list 35 tools. You will not remember them. Here are the categories that matter, along with a representative tool in each.

| Tool | Primary Use Case | Best For | Key AI Feature |
| --- | --- | --- | --- |
| Applitools | Visual regression testing | Design-sensitive applications, banking, e-commerce | Visual AI for screenshot comparison |
| Mabl | End-to-end test automation | Agile teams, low-code preference | Self-healing, ML-driven stability |
| Testim | UI test automation | Teams wanting rapid test authoring with stability | AI-driven element identification |
| Functionize | Complex enterprise test automation | Large engineering teams, enterprise SaaS | NLP test authoring, adaptive learning |
| BlinqIO | AI virtual testers from Cucumber specs | Teams already using BDD, need 24/7 test coverage | Prompt engineering for test translation |
| Katalon | All-in-one: web, mobile, API, desktop | Teams wanting single platform coverage | AI-powered test recommendations |
| LambdaTest (Kane AI) | Cross-browser cloud testing | Teams needing broad browser/device coverage | Natural language test generation |
| Perfecto (Agentic) | Agentic runtime testing | Enterprises ready for fourth wave capabilities | Agentic AI runtime decision-making |

Where AI in Automated Testing Actually Saves Time (And Where It Does Not)

Where AI Genuinely Helps

- Test maintenance reduction. Self-healing handles the single most frustrating part of automation at scale. Locator-based failures drop significantly with mature self-healing tooling.

- Coverage expansion. AI-generated tests from user stories capture edge cases that manual test case design misses, particularly around data combinations and boundary conditions.

- Faster authoring for known patterns. NLP test authoring accelerates the creation of initial tests for CRUD operations, form validation, and navigation flows.

- Visual regression at scale. Running visual checks across hundreds of pages and viewports manually is not feasible. Visual AI makes it routine.

- Test data generation. AI-assisted test data creation handles the privacy, variety, and volume problems that manual test data management struggles with.

Where AI Struggles or Creates New Problems

- Requirements quality. AI test generation is only as good as the inputs. Ambiguous requirements produce ambiguous tests. The TestGuild survey found that starting with requirements, not test generation, is the expert consensus advice for 2026.

- Agentic governance. Autonomous agents making testing decisions need oversight structures that most QA teams have not built yet. Logging agent decisions, reviewing agent reasoning, and defining intervention thresholds are non-trivial problems.

- Context and business logic. AI tools do not understand the business. A self-healing test that updates its locator may now be pointing to the wrong thing in a business logic sense, even though it passes. Human review of self-healing decisions matters.

- Team skills gaps. The World Quality Report found that 50% of organisations cite skills gaps as a barrier to AI adoption in QA. AI testing tools require a range of skills, not just automation skills.

How to Start With AI in Automated Testing: A Practical Path

Based on what I see working across enterprise QA engagements, here is a path that avoids the common mistakes.

- Audit your current maintenance burden first. If your team spends more than 30% of automation time on maintenance, self-healing is your priority. That is the pain AI solves most reliably today.

- Pick one tool for one pain point. Run a trial with the same 5 test scenarios across two tools. Compare maintenance effort, not just initial authoring speed. Long-term stability matters more.

- Build governance before you scale. Define what AI can do autonomously and what requires human sign-off. Log agent and self-healing decisions. Review them in sprint retrospectives. You need visibility before you need scale.

- Invest in AI literacy across the team. Every tester needs enough AI understanding to evaluate tool outputs critically. This is not optional if you want to use AI responsibly in a QA context.

- Connect testing to requirements, not just code. The expert consensus from TestGuild’s 50+ interviews is consistent: the ROI from AI testing multiplies when requirements quality improves first. Garbage in, garbage out applies to test generation as much as anywhere.
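The audit in the first step comes down to one ratio. Here is a small sketch of the arithmetic, assuming you already track automation hours by category. The hour figures and category names are made up for illustration; only the 30% threshold comes from the step above.

```python
def maintenance_share(maintenance_hours: float, total_automation_hours: float) -> float:
    """Share of automation effort spent on maintaining existing tests."""
    if total_automation_hours <= 0:
        raise ValueError("total_automation_hours must be positive")
    return maintenance_hours / total_automation_hours


THRESHOLD = 0.30  # the 30% rule of thumb from the first step

# Hypothetical hours logged by one team over a sprint.
sprint_hours = {"authoring": 42.0, "maintenance": 31.0, "analysis": 17.0}

share = maintenance_share(sprint_hours["maintenance"], sum(sprint_hours.values()))
prioritise_self_healing = share > THRESHOLD

print(f"Maintenance share: {share:.1%}")
print("Prioritise self-healing:", prioritise_self_healing)
```

Track this number before and after a trial. It is the comparison that tells you whether a tool reduced maintenance or just moved the effort somewhere else.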

Frequently Asked Questions About AI in Automated Testing

Does AI in automated testing replace QA engineers?

No. AI handles the repeatable, pattern-based, and maintenance-heavy parts of testing. It does not replace the judgment, domain knowledge, and exploratory skills that experienced QA engineers provide. The role changes; it does not disappear. Teams that frame this as replacement create resistance and miss the actual opportunity.

What is self-healing test automation?

Self-healing test automation is the ability of an AI-powered testing tool to detect when a test locator or element reference breaks due to an application change and automatically update or replace it to keep the test running. The best implementations maintain multiple locator strategies and log their decisions for human review.

How does AI generate test cases automatically?

AI test generation typically uses natural language processing to parse requirements, user stories, or recorded application behaviour and translate them into executable test scenarios. The quality depends on the clarity of the inputs. NLP-based tools like Functionize and BlinqIO enable testers to describe scenarios in plain English and generate automated code from those descriptions.

What is the difference between AI in automated testing and traditional automation?

Traditional automation follows deterministic, script-based logic. If the application changes, the script breaks and a human must fix it. AI in automated testing uses machine learning to adapt to changes, predict failures, and dynamically make decisions about test execution. The key advantage is resilience and adaptability. The key challenge is governance and trust in AI decision-making.

Is AI in automated testing safe for regulated industries?

It depends on the tool and the use case. Self-healing and visual testing pose a lower risk than fully autonomous, agentic testing in regulated environments. The World Quality Report identifies data privacy (67%) and integration complexity (64%) as the top barriers in enterprise AI testing adoption. Regulated teams need clear audit trails of test decisions, which the better tools now provide.

What are the most common mistakes when adopting AI testing tools?

The most common mistakes are: trying to automate everything at once rather than solving one specific pain point first; skipping governance frameworks for AI decisions; not investing in team AI literacy; and expecting AI to compensate for poor requirements quality. The teams succeeding with AI testing treated it as a process change, not just a tool purchase.

Which AI tool is best for automated testing?

There is no single best tool. Applitools leads for visual testing. Mabl and Testim are strong choices for self-healing UI automation in agile teams. Functionize suits complex enterprise scenarios. Katalon provides the broadest platform coverage. The right choice depends on your primary pain point, stack, and team skills. Run trials before committing.

Final Thoughts on AI in Automated Testing

AI in automated testing is not hype. Neither is it magic. It is a set of specific capabilities that solve specific problems in the testing workflow.

Self-healing reduces maintenance. Test generation accelerates authoring. Predictive analytics sharpens prioritisation. Visual AI catches what DOM testing misses. Agentic AI is emerging as the next frontier.

The teams getting real value from AI testing in 2026 share three things. They started with a specific pain point, not a broad transformation mandate. They built governance alongside tooling. And they treated human judgment as a feature, not a workaround.

If you are building or running a QA function and wondering where to start, start with maintenance. It is the highest daily cost of test automation, and self-healing AI has a proven track record of reducing it. Everything else can follow.

About the Author

George Ukkuru is Practice Director for Quality Engineering and Automation at Speridian Technologies, with 26 years of enterprise QA consulting experience. He is the founder of testmetry.com, author of Software Test Estimation Simplified, and host of the Automation Hangout podcast. He speaks at Automation Guild, TestFlix, BrowserStack summits, and BrightTALK.