If you’ve been in QA for more than five minutes, you’ve heard the pitch: AI test generators write your tests for you. No coding. No fuss. Just plug in your app and watch coverage appear. I’ve been in enterprise QA for 26 years. I’ve watched vendors make this exact claim at least a dozen times since the early days of automation.

So let me tell you what’s real about AI test generators in 2026, what’s still hype, and which tools are actually worth your time.

What Is an AI Test Generator?

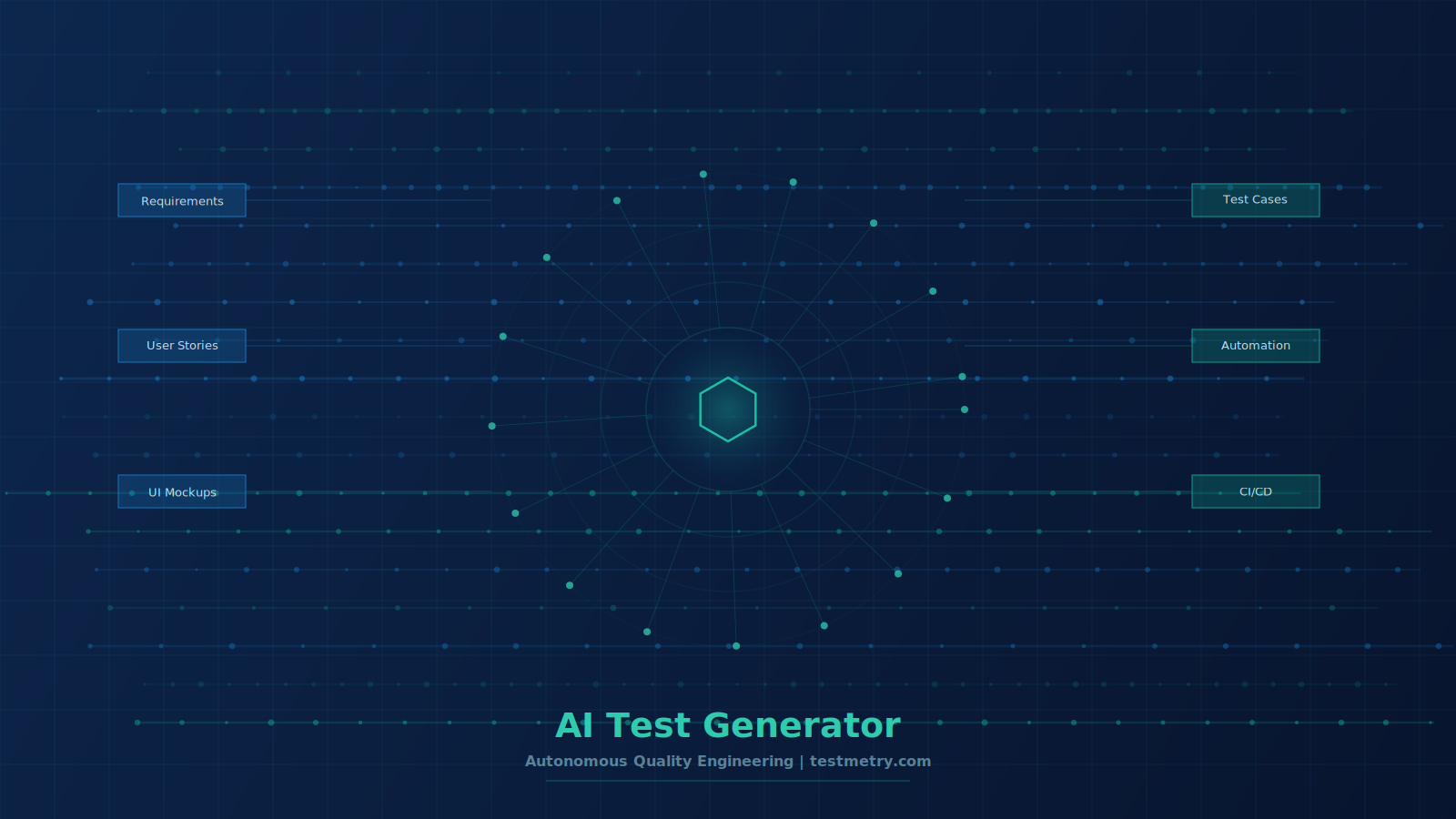

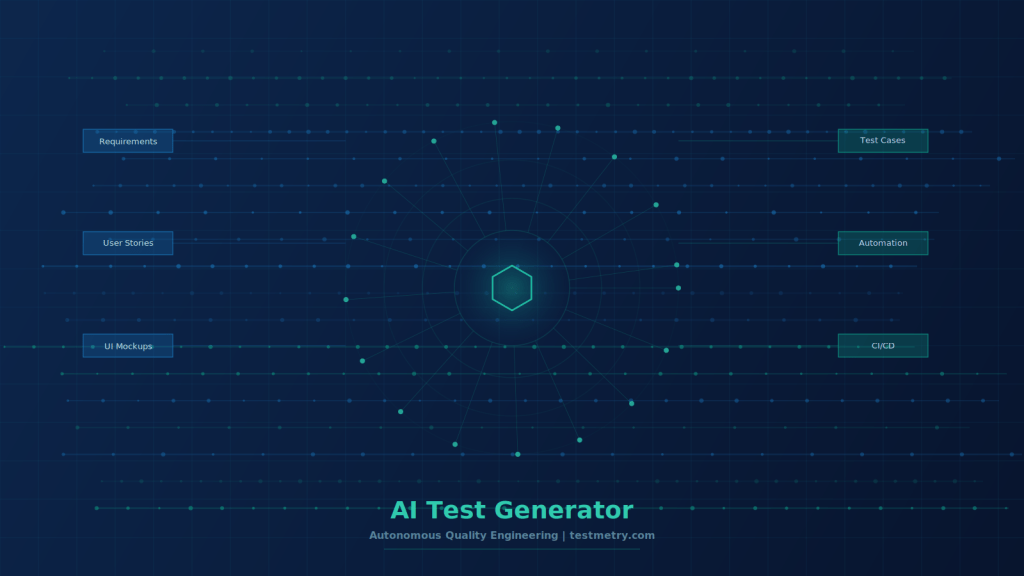

An AI test generator is a tool that uses machine learning, large language models (LLMs), or natural language processing (NLP) to automatically generate test cases, test scripts, or test data from inputs such as requirements, user stories, application URLs, UI mockups, or existing code.

The keyword here is ‘automatically.’ You’re not writing test steps line by line. The AI analyzes your application or requirements and builds the test logic for you.

That’s the promise. The reality is more nuanced, and I’ll get into that shortly.

Key distinction: There are two types of AI test generators: those that assist humans in writing tests faster (AI-assisted), and those that generate and run tests without human authoring (autonomous). They are not the same thing. Most tools are the former.

How Does an AI Test Generator Work?

Here’s the technical layer most blog posts skip. AI test generators don’t work like magic. They follow a fairly consistent process under the hood.

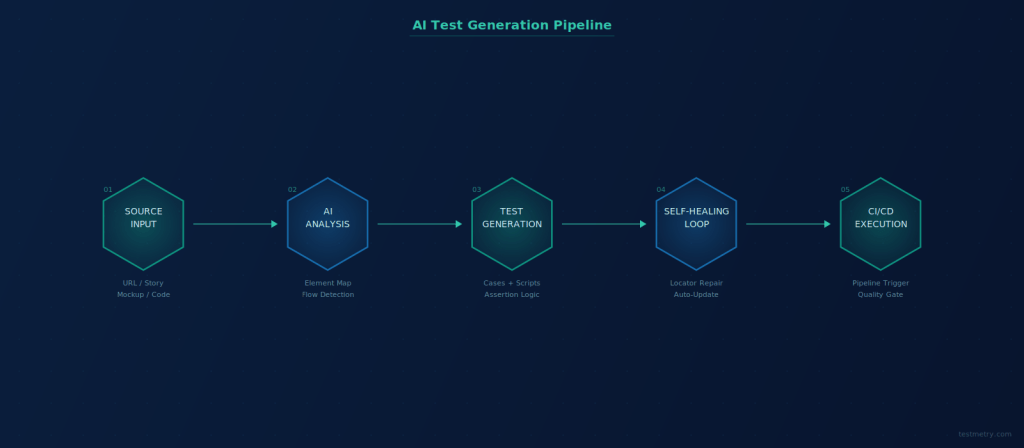

The AI ingests a source — your app’s URL, a user story, a UI screenshot, or requirement text. It then maps the application structure: buttons, forms, flows, input fields, and page navigation paths.

From that structure, it generates test scenarios, assigns expected outcomes, and produces executable scripts or natural language test steps. More advanced tools also generate test data on the fly and automatically attach assertions.
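To make that flow concrete, here is a minimal sketch of the generation step, assuming the mapping step has already produced a list of form fields. `FieldSpec`, `Scenario`, and `generate_scenarios` are hypothetical names invented for illustration, not any vendor's API, and the fixed rules below stand in for what a real tool would do with an LLM or a trained model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FieldSpec:
    """One input field discovered while mapping a page (hypothetical model)."""
    name: str
    required: bool = False
    max_length: Optional[int] = None


@dataclass
class Scenario:
    """A generated scenario: inputs to submit plus the expected outcome."""
    title: str
    inputs: dict
    expected: str


def generate_scenarios(form_name: str, fields: list) -> list:
    """Derive one happy path plus one negative scenario per field constraint."""
    # A valid value for every field: short filler text within any length limit.
    valid = {f.name: "x" * min(f.max_length or 8, 8) for f in fields}
    scenarios = [Scenario(f"{form_name}: valid submission", dict(valid), "accepted")]
    for f in fields:
        if f.required:
            inputs = dict(valid)
            inputs[f.name] = ""  # leave the required field empty
            scenarios.append(Scenario(f"{form_name}: missing {f.name}", inputs, "rejected"))
        if f.max_length is not None:
            inputs = dict(valid)
            inputs[f.name] = "x" * (f.max_length + 1)  # one character over the limit
            scenarios.append(Scenario(f"{form_name}: {f.name} too long", inputs, "rejected"))
    return scenarios


# Hypothetical login form, as a mapping step might describe it.
login = [
    FieldSpec("email", required=True, max_length=254),
    FieldSpec("password", required=True),
]
for scenario in generate_scenarios("login", login):
    print(scenario.title, "->", scenario.expected)
```

Notice what the sketch can and cannot produce: it derives scenarios from structure (required flags, length limits), but every expected outcome is inferred from the form itself, not from a requirement.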

The key technical components making this work today:

- LLMs trained on millions of code repositories and test suites understand context and can generate assertions, not just steps

- NLP converts plain English instructions into executable test scripts

- Computer vision and DOM analysis allow the AI to understand UI elements without relying on brittle XPath selectors

- Self-healing algorithms detect when an element locator breaks and automatically remap it to the correct element

The self-healing piece is where the real enterprise value sits. Traditional Selenium scripts tend to break as soon as a developer renames a button’s ID or changes whatever attribute the locator depends on. A good AI test generator notices the change and repairs the locator without human intervention.
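A rough sketch of the self-healing idea, under heavy simplifying assumptions: the page is a list of plain dictionaries rather than a real DOM, and "similarity" is a simple count of matching attributes, where commercial tools use trained models and visual signals. `Fingerprint`, `similarity`, and `locate` are hypothetical names for illustration only.

```python
from dataclasses import dataclass


@dataclass
class Fingerprint:
    """Attributes recorded for an element when the test was authored."""
    element_id: str
    tag: str
    text: str
    css_class: str


def similarity(fp: Fingerprint, candidate: dict) -> float:
    """Fraction of the non-id attributes that still match the candidate."""
    checks = [
        fp.tag == candidate.get("tag"),
        fp.text == candidate.get("text"),
        fp.css_class == candidate.get("class"),
    ]
    return sum(checks) / len(checks)


def locate(fp: Fingerprint, dom: list, threshold: float = 0.6):
    """Return (element, healed). Falls back to attribute matching if the id is gone."""
    for el in dom:
        if el.get("id") == fp.element_id:
            return el, False  # primary locator still works
    best = max(dom, key=lambda el: similarity(fp, el), default=None)
    if best is None or similarity(fp, best) < threshold:
        raise LookupError(f"no confident match for #{fp.element_id}")
    fp.element_id = best.get("id", fp.element_id)  # remap the stored locator
    return best, True


# The button's id was renamed from btn-submit to btn-checkout.
submit = Fingerprint("btn-submit", "button", "Place order", "primary")
dom_after_rename = [
    {"id": "nav-home", "tag": "a", "text": "Home", "class": "link"},
    {"id": "btn-checkout", "tag": "button", "text": "Place order", "class": "primary"},
]
element, healed = locate(submit, dom_after_rename)
print(element["id"], healed)
```

The `healed` flag matters as much as the match itself. A heal is a guess, so a sensible setup logs every remapped locator for a human to accept or reject instead of silently trusting it.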

Why QA Teams Are Switching to AI-Generated Tests

The math is brutal if you’re still doing manual test authoring at scale. Studies show teams spend up to 80% of their automation effort maintaining existing tests, not writing new coverage. That leaves almost nothing for keeping pace with development velocity.

AI test generators flip that ratio. The tools handle maintenance. Your engineers focus on coverage strategy, edge case design, and risk analysis.

Measured outcomes from enterprise teams in 2025-2026:

- 9x faster test creation compared to manual authoring

- Up to 88% reduction in test maintenance effort

- 10x increase in test coverage within the same sprint capacity

- 30% fewer bugs reaching production after six months on AI-assisted platforms

I’ve seen similar numbers in client deployments. The caveat is always the same: these results require proper setup, a well-structured test strategy, and human oversight on what the AI generates.

The AI doesn’t know your business logic. You do. That’s the partnership.

The Top AI Test Generator Tools in 2026

I’ve evaluated these tools across real client environments. Here’s the honest breakdown, not the vendor brochure version.

1. Virtuoso QA

Built AI-native from the ground up. That matters. Most competitors bolted AI onto a legacy test recorder. Virtuoso designed NLP, ML, and self-healing into the core architecture.

Virtuoso reports that users accept more than 95% of its self-heals in production use. Tests authored in plain English. No coding required from the QA team. Integrates with Jira, Jenkins, Azure DevOps, and BrowserStack without friction.

Best for: Enterprise teams who need non-technical stakeholders involved in test authoring.

2. Testim

Strong machine learning for element identification. Their AI locators learn your application over time, making tests progressively more stable. Supports web, mobile, and Salesforce testing.

Teams report 50% faster test authoring compared to traditional tools. Bug rates dropped 30% in long-term deployments.

Best for: Technical QA teams doing E2E testing on complex web applications.

3. testRigor

Plain English test authoring that actually works at scale. You describe what the end user does and testRigor figures out how to execute it. Tests mirror user behavior, not internal selectors.

Gartner named them a Cool Vendor in 2023. The approach makes tests resilient to UI changes by design.

Best for: Teams who want readable tests that non-developers can interpret and update.

4. ACCELQ Autopilot

Autonomous discovery, generation, and execution in one flow. The AI analyzes your application, discovers test scenarios you didn’t think to write, generates modular test components, and self-heals as the app evolves.

Full-stack coverage across web, API, mobile, desktop, and mainframe. Rare combination for enterprise QA.

Best for: Large QA organizations running multi-platform testing at enterprise scale.

5. CloudQA

Free tier makes this accessible for smaller teams. Generates test cases from a live URL, UI mockup image, or wireframe. Google Cloud Vision AI powers the element recognition.

Not the deepest AI on this list, but the barrier to entry is the lowest. Good entry point for teams new to AI-generated testing.

Best for: Smaller teams or teams evaluating AI test generation for the first time.

6. GitHub Copilot

Not a standalone test generator, but worth mentioning. Copilot suggests test code as you write it inside your IDE. It’s AI-assisted, not autonomous.

A GitHub survey showed 70% of developers say Copilot improves code quality and completion time. For test code specifically, it accelerates authoring without replacing the tester’s judgment.

Best for: Developer-led teams that write automated tests with frameworks like Playwright or Cypress.

What AI Test Generators Still Cannot Do

This is the part the vendors leave out of their pitch decks.

AI test generators do not understand your business rules unless you tell them. They don’t know that a discount code should be used only once per customer. They don’t know that a user with a pending KYC review shouldn’t access premium features.

They generate tests based on observed behavior and structural analysis. If your application has a logic defect baked in from the start, the AI will generate tests that pass against that defect.

AI-generated tests validate what your application does. You still need human testers to validate what your application should do. That distinction has not changed. It will not change.

- Edge cases with complex business logic still require manual test design

- Security and penetration testing remain human-led disciplines

- Exploratory testing cannot be automated away

- Regulatory compliance testing requires human sign-off in most industries

- AI-generated tests require human review before they enter regression suites
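The gap between "what the application does" and "what it should do" is easy to show with the discount code example. The toy `Checkout` class below is hypothetical and contains a deliberate defect. `generated_test` stands in for a test derived from observed behavior, and `business_rule_test` for one written by someone who knows the rule.

```python
class Checkout:
    """Toy checkout with a deliberate defect: codes are never marked as used."""

    def __init__(self) -> None:
        self.redeemed = set()

    def apply_discount(self, customer: str, code: str, total: float) -> float:
        # Defect: the (customer, code) pair is never added to self.redeemed,
        # so the same customer can reuse the same code indefinitely.
        return round(total * 0.9, 2)


def generated_test(checkout: Checkout) -> bool:
    """Asserts whatever the application did when it was observed."""
    first = checkout.apply_discount("alice", "WELCOME10", 100.0)
    second = checkout.apply_discount("alice", "WELCOME10", 100.0)
    return first == 90.0 and second == 90.0


def business_rule_test(checkout: Checkout) -> bool:
    """Asserts the rule: a second use of the same code gets no discount."""
    checkout.apply_discount("alice", "WELCOME10", 100.0)
    return checkout.apply_discount("alice", "WELCOME10", 100.0) == 100.0


print("generated test passes:", generated_test(Checkout()))
print("business rule test passes:", business_rule_test(Checkout()))
```

The behavior-derived test goes green against the defect, and only the rule-aware test fails. Nothing in the application's structure tells a tool that the second redemption is wrong.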

AI Test Case Generator vs. AI Test Generator: Are They the Same?

No, and this distinction matters when you’re evaluating tools.

An AI test case generator produces documentation: test case IDs, steps, expected results, and test data in a format humans can review and track. Tools like the AI Test Case Generator for Jira or CloudQA’s generator work this way.

An AI test generator (in the automation context) produces executable test scripts or automation code that runs directly in your CI/CD pipeline, without human intervention during execution.

Some tools do both. Knowing which category you need saves you a lot of time in the vendor evaluation process.

How to Pick the Right AI Test Generator for Your Team

I’ve helped hundreds of teams pick automation tools. The evaluation framework I use hasn’t changed much.

Start with these four questions:

- Who will author the tests — developers, QA engineers, or business analysts? That determines how much no-code capability you need.

- What is your primary test scope — web, API, mobile, desktop, or all of the above?

- Do you need the AI to generate tests autonomously, or is AI-assisted authoring enough?

- What does your CI/CD pipeline look like? Jenkins, Azure DevOps, GitHub Actions — verify native integration exists before committing.

Don’t evaluate AI test generators in a vacuum. Run a proof of concept on a real application with real user stories. Give the tool two weeks. Measure test creation time, self-healing events, and first-pass execution stability.

Numbers from a controlled POC beat any vendor case study.
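If it helps, the three POC measurements can be rolled up with a few lines of code. This is only a sketch: the record format and the `summarize_poc` helper are assumptions made for illustration, and the sample numbers are invented, not results from any tool.

```python
from statistics import mean


def summarize_poc(runs: list) -> dict:
    """Roll up per-test POC records into the three headline measurements."""
    return {
        "avg_authoring_minutes": mean(r["authoring_minutes"] for r in runs),
        "self_heal_events": sum(r["heals"] for r in runs),
        # Share of tests that passed on their first execution.
        "first_pass_rate": sum(r["first_pass"] for r in runs) / len(runs),
    }


# Invented sample data: one record per test created during the POC.
runs = [
    {"test": "login", "authoring_minutes": 12.0, "heals": 0, "first_pass": True},
    {"test": "checkout", "authoring_minutes": 30.0, "heals": 2, "first_pass": False},
    {"test": "search", "authoring_minutes": 18.0, "heals": 1, "first_pass": True},
    {"test": "profile", "authoring_minutes": 20.0, "heals": 0, "first_pass": True},
]
summary = summarize_poc(runs)
print(summary)
```

Capture the same records for your current authoring approach during the same two weeks, so the comparison is against your own baseline and not a vendor's.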

Integrating an AI Test Generator into Your CI/CD Pipeline

This is where enterprise deployments either succeed or quietly die. The tool itself is only half the equation.

Most AI test generators support API triggers and webhook integrations. The typical integration looks like this: a commit hits your repo, your CI pipeline triggers, the test generator runs the relevant test suite, results feed back to Jira or Azure DevOps, and the team gets a quality gate decision before deployment.

The gap I consistently see in enterprise clients: teams buy the tool but don’t define their quality gates. They run AI-generated tests but never set a pass threshold that blocks a release. The whole thing becomes a reporting exercise rather than a quality control mechanism.

An AI test generator without a quality gate is expensive noise. Define your coverage threshold and failure conditions before you write the first test.
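A quality gate does not have to be elaborate. The sketch below assumes your pipeline can hand you pass and fail counts plus a count of failures in tests you have tagged as release-blocking. `SuiteResult`, `quality_gate`, and the 95% default threshold are illustrative choices, not a standard.

```python
from dataclasses import dataclass


@dataclass
class SuiteResult:
    passed: int
    failed: int
    critical_failures: int  # failures in tests tagged as release-blocking


def quality_gate(result: SuiteResult, min_pass_rate: float = 0.95):
    """Return (allowed, reason) for a release decision."""
    total = result.passed + result.failed
    if total == 0:
        return False, "no tests executed"
    if result.critical_failures > 0:
        return False, f"{result.critical_failures} critical test(s) failed"
    pass_rate = result.passed / total
    if pass_rate < min_pass_rate:
        return False, f"pass rate {pass_rate:.1%} is below {min_pass_rate:.0%}"
    return True, f"pass rate {pass_rate:.1%}"


ok, reason = quality_gate(SuiteResult(passed=188, failed=12, critical_failures=0))
print("release allowed" if ok else "release blocked", "-", reason)
# In a CI job, a blocked gate would typically translate into a non-zero
# exit code, for example: sys.exit(0 if ok else 1)
```

An empty run is treated as a failure on purpose. A suite that silently executed nothing should block the release, not wave it through.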

For a deeper look at how automation connects to DevOps delivery pipelines, the DORA State of DevOps Report is worth reading. It has multi-year data on how testing practices correlate with deployment frequency and change failure rates.

AI Test Generators and the Cost of Test Maintenance

Here’s the business case that convinces the CFO, not just the QA lead.

Traditional test automation breaks every time the UI changes. Development teams move fast. They rename buttons, restructure the navigation, and push layout changes without considering the test suite.

Each of those changes triggers a maintenance cycle. Someone has to find the broken test, diagnose the locator failure, update the selector, re-run the suite, and confirm it’s clean. At scale, that’s weeks of engineering time per sprint.

Self-healing AI test generators automatically absorb those changes. The AI detects that the element has shifted, remaps the locator, and continues execution. Virtuoso reports user acceptance of self-heals above 95%. Teams report an 85% reduction in maintenance costs.

The ROI case writes itself once you quantify the number of engineer-hours spent on manual maintenance today.
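Here is one way to run that arithmetic. Every input below is a placeholder to be replaced with your own figures: the hours, hourly cost, sprint count, and tool cost are invented for illustration, and the 85% reduction is the vendor-reported figure quoted above, not a guaranteed outcome. `maintenance_savings` is a hypothetical helper.

```python
def maintenance_savings(hours_per_sprint: float, hourly_cost: float,
                        sprints_per_year: int, reduction: float,
                        annual_tool_cost: float) -> dict:
    """Estimate annual maintenance spend and what a given reduction would save."""
    current = hours_per_sprint * hourly_cost * sprints_per_year
    saved = current * reduction
    return {
        "current_annual_cost": current,
        "annual_savings": saved,
        "net_benefit": saved - annual_tool_cost,  # savings after paying for the tool
    }


# Placeholder inputs: 120 maintenance hours per two-week sprint at $75/hour.
estimate = maintenance_savings(
    hours_per_sprint=120,
    hourly_cost=75,
    sprints_per_year=26,
    reduction=0.85,
    annual_tool_cost=60_000,
)
for name, value in estimate.items():
    print(f"{name}: ${value:,.0f}")
```

Run it again with a pessimistic reduction, say 0.40, before taking it to finance. If the net benefit is still positive at that level, the case holds up without relying on best-case vendor numbers.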

Frequently Asked Questions

1. What is an AI test generator in software testing?

An AI test generator is a tool that uses machine learning, large language models, or natural language processing to automatically create test cases, test scripts, or automation code. It takes inputs like requirements, user stories, or application URLs and generates executable tests without requiring manual step-by-step authoring from a QA engineer.

2. Can AI test generators replace manual testers?

No. AI test generators automate test creation and maintenance. They do not replace the judgment a human tester brings to exploratory testing, business logic validation, edge case identification, or regulatory compliance testing. The best teams use AI generation to handle volume while freeing human testers for high-value thinking work.

3. What is the difference between AI-assisted and autonomous AI testing?

AI-assisted testing uses AI to help humans write tests faster through suggestions, code completion, or plain-language authoring. Autonomous AI testing generates, executes, and maintains tests with minimal human involvement. Most commercial tools today are AI-assisted. True autonomous testing is still maturing, but platforms like ACCELQ Autopilot and BlinqIO are pushing in that direction.

4. How do self-healing tests work in AI test generators?

Self-healing uses machine learning to track multiple identifiers for each UI element: visual appearance, DOM structure, element description, and positional context. When a single identifier changes due to a UI update, the AI uses the remaining identifiers to locate the correct element and automatically updates the broken locator. This removes most of the manual locator maintenance that UI changes would otherwise trigger.

5. Are there free AI test generators for software testing?

Yes, several platforms offer free tiers. CloudQA allows test case generation from a URL or mockup at no cost. GitHub Copilot includes test code suggestions in its standard subscription. Most enterprise-grade tools, like Testim, Virtuoso QA, and ACCELQ, offer free trials but no permanent free plans. The free tools work for evaluation but will hit limits quickly at production scale.

6. How accurate are AI-generated test cases?

Accuracy depends heavily on the quality of the input. Clear user stories with defined acceptance criteria produce significantly more accurate test cases than vague feature descriptions. Enterprise platforms like Virtuoso QA report conversion rates of 85%+ in trials. You should always review AI-generated tests before adding them to a regression suite. Never blindly trust generation output, regardless of the tool.

About the Author

George Ukkuru is Practice Director for Quality Engineering and Automation at Speridian Technologies. He has 26 years of enterprise QA consulting experience working with Fortune 500 and Global 1000 clients across financial services, healthcare, and technology sectors. He is the founder of testmetry.com, author of ‘Software Test Estimation Simplified,’ and a recognized speaker at Automation Guild, BrowserStack summits, and TestFlix. He hosts the Automation Hangout podcast and advises enterprise teams on adopting AI-driven quality engineering.